Crawl Budget Optimization Guide 2026: Ensure Googlebot Efficiently Crawls Your Site

Among the most critical yet frequently overlooked aspects of technical SEO is crawl budget optimization. For websites with thousands or millions of pages, how efficiently Google allocates its crawling resources to your site can make or break your organic visibility. No matter how outstanding your content is, if Googlebot cannot discover and index your pages, they will never appear in search results.

In this guide, we will explore the crawl budget concept in depth, examine all the factors that influence it, and provide actionable optimization strategies you can implement in 2026.

What Is Crawl Budget?

Crawl budget refers to the number of pages Google is willing and able to crawl on your site within a given time period. This concept is composed of two fundamental components that work together to determine your actual crawl allocation.

Crawl Rate Limit

The crawl rate limit defines the maximum number of simultaneous connections and requests Googlebot can make to your server without causing performance degradation. Google dynamically adjusts this limit based on your server's responsiveness. When your server responds quickly and reliably, Google increases the crawl rate. Conversely, if your server starts returning errors or slowing down, Google automatically throttles back to avoid overwhelming your infrastructure.

Crawl Demand

Crawl demand represents how much Google wants to crawl your site. This demand is driven by several factors including the popularity of your URLs, how frequently your content changes, and how stale your indexed pages have become. Pages that receive numerous external links or generate significant search traffic naturally attract higher crawl demand.

Your effective crawl budget is the intersection of these two components. Google considers both your server's capacity and the perceived value of crawling your content to determine how many resources to allocate to your site.

Why Crawl Budget Matters

For small to medium websites with a few hundred pages, crawl budget rarely presents a problem. Google can easily crawl these sites without any resource constraints. However, crawl budget becomes critically important in several scenarios.

Large-scale websites with tens of thousands to millions of pages face inherent crawl budget challenges. An e-commerce site where every product variant, filter combination, and pagination page creates a unique URL can easily generate hundreds of thousands of URLs. Google must crawl all of these within limited resources.

Frequently updated sites such as news portals, content platforms, and blogs that publish dozens or hundreds of new pieces daily need rapid discovery and indexing. If crawl budget is spent inefficiently, new content may take days or weeks to appear in search results.

Sites undergoing major changes like migrations, redesigns, or bulk content additions are particularly vulnerable to crawl budget issues. When you add thousands of new product pages to an e-commerce store, those products depend on crawl budget for discovery and indexing.

Technically problematic sites waste crawl budget on low-value URLs, causing Googlebot to spend time on meaningless pages instead of your valuable content. This results in indexing delays and organic traffic losses.

SEOctopus's Technical SEO Audit module provides a comprehensive analysis of your site's crawlability, identifying issues that waste crawl budget and prioritizing fixes based on impact.

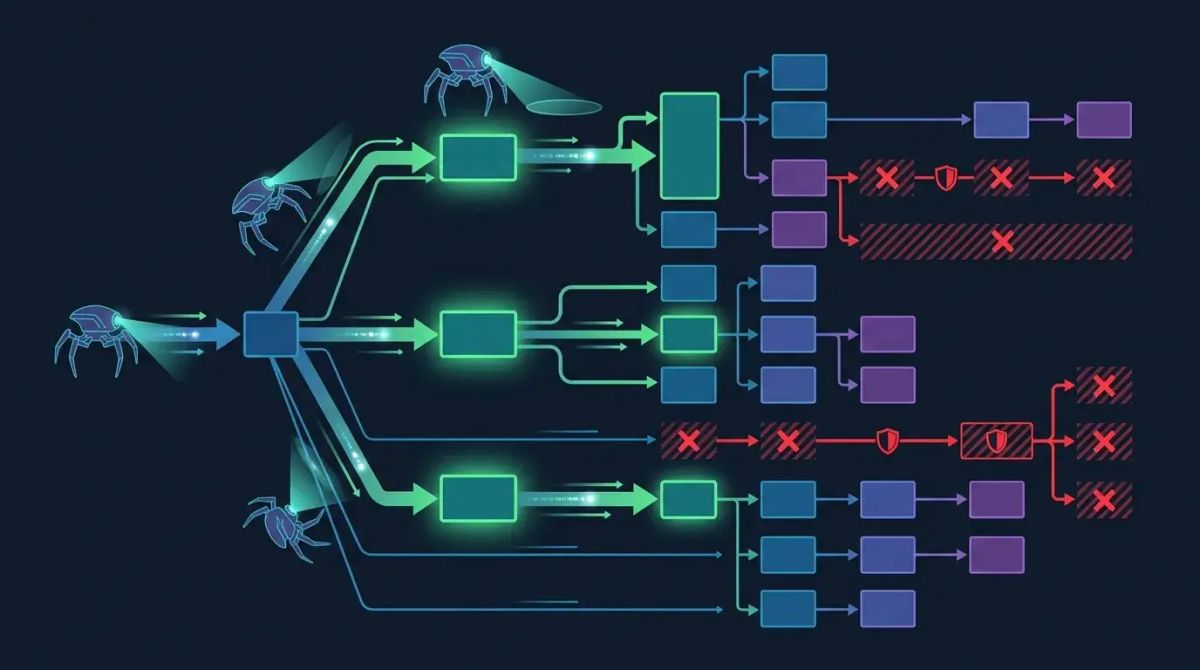

How Googlebot Crawls Your Site

Understanding Googlebot's crawling process is fundamental to effective crawl budget optimization. The crawling pipeline operates through several stages.

First, Googlebot maintains a crawl queue populated from previously known URLs, XML sitemaps, and newly discovered links. This queue is ordered by priority, with important and frequently changing pages receiving higher priority. When Googlebot requests a page, it evaluates the server's response code, response time, and content. After a successful response, it extracts all links from the page and adds qualifying URLs to the crawl queue. Throughout this process, Googlebot respects your robots.txt directives.

Modern Googlebot can also process JavaScript content. However, JavaScript rendering requires a separate rendering queue and additional computational resources. This two-phase crawling and rendering pipeline means JavaScript-heavy sites experience longer discovery-to-indexing times.

Factors That Waste Crawl Budget

Identifying and eliminating crawl budget waste is the first step toward optimization. Several common issues consume crawling resources without providing any value.

Duplicate Content and Duplicate URLs

When the same content is accessible through multiple URLs, Googlebot wastes resources crawling identical pages. Common culprits include www versus non-www variants, HTTP versus HTTPS versions, trailing slash inconsistencies, and case-sensitivity differences. Implementing proper canonical tags and 301 redirects resolves these issues and consolidates crawling signals.
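The variants listed above can be folded onto one preferred URL before deciding which redirects and canonical tags to emit. The following is a minimal sketch, not a definitive implementation: the host `www.example.com`, the helper names, and the choice to lowercase paths and drop trailing slashes are all assumptions that only hold if your server treats those variants as the same page.

```python
from urllib.parse import urlsplit, urlunsplit

PREFERRED_HOST = "www.example.com"  # hypothetical canonical host


def strip_www(host: str) -> str:
    """Return the host without a leading 'www.' label."""
    return host[4:] if host.startswith("www.") else host


def normalize_url(url: str) -> str:
    """Map common duplicate variants of a URL onto one preferred form."""
    parts = urlsplit(url)
    host = parts.netloc.lower()
    # Fold www and non-www variants onto the preferred host.
    if strip_www(host) == strip_www(PREFERRED_HOST):
        host = PREFERRED_HOST
    # Assumption: paths are case-insensitive and served without a trailing slash.
    path = parts.path.lower().rstrip("/") or "/"
    # Force HTTPS and drop any fragment; the query string is kept as-is.
    return urlunsplit(("https", host, path, parts.query, ""))


variants = [
    "http://example.com/Shoes/",
    "https://www.example.com/shoes",
    "HTTP://WWW.EXAMPLE.COM/shoes/",
]
targets = {normalize_url(u) for u in variants}
print(targets)  # all three variants collapse to one URL
```

Any URL whose normalized form differs from the requested form is a candidate for a 301 redirect to that normalized form.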

Parameter URLs

On e-commerce sites, filtering, sorting, and pagination parameters can generate thousands of unnecessary URLs. A single category page with color, size, price range, and sort order parameters can produce hundreds of unique URL combinations. Each of these appears as a separate page to Googlebot, consuming valuable crawl resources for essentially duplicate content.
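To see how quickly parameters multiply, the sketch below enumerates every URL a single category page could expose. The facet names and values are invented for illustration; substitute the ones your own site uses.

```python
from itertools import product
from urllib.parse import urlencode

# Hypothetical facets for one category page.
FACETS = {
    "color": ["red", "blue", "black", "white"],
    "size": ["s", "m", "l", "xl"],
    "price": ["0-50", "50-100", "100-200"],
    "sort": ["price_asc", "price_desc", "newest"],
}


def facet_urls(base: str, facets: dict) -> list:
    """Enumerate every URL produced by optional facet parameters."""
    # Each facet is either absent (None) or set to one of its values.
    options = [[None] + values for values in facets.values()]
    urls = []
    for combo in product(*options):
        params = {k: v for k, v in zip(facets, combo) if v is not None}
        urls.append(base + ("?" + urlencode(params) if params else ""))
    return urls


urls = facet_urls("https://www.example.com/shoes", FACETS)
print(len(urls))  # 5 * 5 * 4 * 4 = 400 distinct URLs for one category
```

Four modest facets already yield 400 crawlable addresses for what is essentially one product listing, before parameter order or pagination is taken into account.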

Infinite Crawl Traps

Calendar modules, session-based URLs, and infinite scroll implementations can trap Googlebot in endless loops. For example, a calendar widget with next month and previous month links can lead Googlebot through thousands of irrelevant date-based URLs. Similarly, infinite scroll without proper HTML fallback can prevent Googlebot from discovering linked content.

Soft 404 Pages

Pages that return a 200 status code but contain no meaningful content are classified as soft 404s. Google crawls these pages but cannot index them productively. The correct approach is ensuring these pages return proper 404 or 410 status codes, signaling to Googlebot that crawling them is unnecessary.

Redirect Chains

Redirect chains where one URL redirects to another, which redirects to yet another waste crawl budget because each redirect hop requires a separate request. They also dilute link equity through the chain. Consolidating redirect chains into direct single-hop redirects improves both crawl efficiency and link signal transfer.
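Consolidation can be worked out offline from an exported redirect map. The sketch below assumes the map is a simple source-to-target dictionary (the paths and the function name are hypothetical) and resolves every source to its final destination so each rule can be rewritten as a single hop.

```python
def resolve_final_targets(redirects: dict, max_hops: int = 10) -> dict:
    """Return a map from each redirect source to its final destination."""
    final = {}
    for source in redirects:
        seen = {source}
        target = redirects[source]
        hops = 1
        # Keep following while the current target is itself redirected.
        while target in redirects:
            if target in seen or hops >= max_hops:
                raise ValueError(f"redirect loop or overly long chain from {source}")
            seen.add(target)
            target = redirects[target]
            hops += 1
        final[source] = target
    return final


chain = {"/old-shoes": "/shoes-2024", "/shoes-2024": "/shoes-2025", "/shoes-2025": "/shoes"}
print(resolve_final_targets(chain))
# every source now points straight at /shoes
```

Raising on loops rather than silently skipping them is a deliberate choice here: a loop is an error that needs a manual fix, not a rule that can be flattened.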

Thin and Low-Quality Pages

Pages with minimal content that provide no real user value are treated as low quality by Google. Crawling these pages diverts resources from your valuable pages. Regularly auditing your content and removing or improving thin pages frees up crawl budget for pages that matter.

SEOctopus's Crawl Analysis feature crawls your site from Googlebot's perspective, detecting each of these issues and providing prioritized remediation recommendations.

Robots.txt Optimization

Your robots.txt file is the most fundamental tool for crawl budget management. A properly configured robots.txt file directs Googlebot toward valuable pages while blocking access to areas that waste crawling resources.

Areas to Block

You should consider blocking admin and back-office pages, internal search result pages, filter and sort parameter combinations, shopping cart and checkout steps, user profile pages, and print-friendly page versions. These areas consume crawl budget without contributing to your organic search presence.

Important Considerations

Do not block CSS and JavaScript files. Google needs access to these resources to properly render your pages. Blocking them can result in Google seeing a broken version of your site, which negatively affects both crawling and ranking. Always verify that blocked areas do not contain important pages that should be indexed. Test any robots.txt changes before deploying them to production.
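One way to test a rule set before deployment is to parse a draft file locally and check a handful of paths. This sketch uses Python's standard `urllib.robotparser`; the paths and the domain are hypothetical. Note that this parser only does simple prefix matching and does not implement Google's wildcard or longest-match behavior, so treat it as a rough pre-check rather than a faithful Googlebot simulation.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical draft robots.txt using plain path prefixes only.
ROBOTS_TXT = """\
User-agent: *
Disallow: /admin/
Disallow: /search
Disallow: /cart/
Disallow: /checkout/
Disallow: /print/

Sitemap: https://www.example.com/sitemap-index.xml
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())


def is_crawlable(path: str, agent: str = "Googlebot") -> bool:
    """Check whether the draft rules allow the agent to fetch a path."""
    return parser.can_fetch(agent, "https://www.example.com" + path)


for path in ["/admin/users", "/search?q=shoes", "/products/shoes", "/assets/site.css"]:
    print(path, is_crawlable(path))
```

The last two paths matter most: product pages and CSS or JavaScript assets should remain crawlable after any change.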

SEOctopus's robots.txt checker tool analyzes your existing robots.txt file, identifying misconfigurations, missing directives, and optimization opportunities that could improve your crawl budget utilization.

XML Sitemap Optimization

XML sitemaps are your most direct communication channel with Google about which pages exist on your site and when they were last updated. They play a crucial role in crawl budget optimization by guiding Googlebot to your most important content.

Sitemap Best Practices

Include only canonical, indexable pages in your sitemaps. Remove noindexed pages, redirected URLs, and error pages from sitemap files. Keep the lastmod dates accurate and current: Google relies on lastmod only when it proves consistently accurate, so fake or inflated modification dates erode its trust in your sitemap signals. For large sites, segment your sitemaps by content type and manage them through a sitemap index file. Each individual sitemap file should contain no more than 50,000 URLs and remain under 50 MB uncompressed.

Dynamic Sitemap Strategy

For large sites, dynamically generated sitemaps are more effective than static files. Create separate sitemap files for each content category. For example, use products-sitemap.xml for product pages, blog-sitemap.xml for articles, and categories-sitemap.xml for category pages. This segmentation helps you monitor which content types are being crawled effectively and identify areas needing improvement.
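A dynamic generator for one content type can be quite small. The sketch below is one possible shape, assuming pages arrive as `(url, lastmod)` pairs from your own database; the function name is hypothetical. It splits output at the 50,000-URL limit but does not check the 50 MB size limit.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
MAX_URLS_PER_FILE = 50_000


def build_sitemaps(pages: list, max_urls: int = MAX_URLS_PER_FILE) -> list:
    """Turn (url, lastmod) pairs into one XML string per sitemap file."""
    documents = []
    for start in range(0, len(pages), max_urls):
        urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
        for loc, lastmod in pages[start:start + max_urls]:
            url = ET.SubElement(urlset, "url")
            ET.SubElement(url, "loc").text = loc
            # lastmod should be the real modification date, e.g. "2026-01-15".
            ET.SubElement(url, "lastmod").text = lastmod
        documents.append(ET.tostring(urlset, encoding="unicode"))
    return documents


products = [(f"https://www.example.com/products/{i}", "2026-01-15") for i in range(5)]
files = build_sitemaps(products, max_urls=2)  # tiny limit to show the splitting
print(len(files))  # 3 files: 2 + 2 + 1 URLs
```

Each returned string would be written to its own file, such as a numbered products sitemap, and listed in the sitemap index.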

URL Parameter Handling

URL parameters, especially on e-commerce sites, are among the largest sources of crawl budget waste. Effective parameter management requires a multi-pronged approach.

For filter and sort parameters, use canonical tags to point all variations back to the primary page. Manage session identifiers and tracking parameters through cookies or JavaScript rather than URL parameters. For pagination, Google no longer uses rel="next" and rel="prev" as indexing signals, so paginated content stays discoverable through clean, crawlable links between pages and through XML sitemaps that list the underlying content.

Older guides recommend the URL Parameters tool in Google Search Console for telling Google which parameters change content and which do not. Google retired that tool in 2022, so parameter handling now has to rely on technical solutions: canonical tags, consistent internal linking to clean URLs, and robots.txt rules for parameters that never change content.
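The canonical target for a parameterized URL can be computed by keeping only the parameters that actually change the content. In this sketch the allow-list `CONTENT_PARAMS` is an assumption (here only `page` is kept, so paginated pages canonicalize to themselves); everything else, including filter, sort, and tracking parameters, is dropped.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Hypothetical allow-list: parameters that select genuinely different content.
CONTENT_PARAMS = {"page"}


def canonical_url(url: str) -> str:
    """Strip parameters that do not change the content of the page."""
    parts = urlsplit(url)
    kept = [(k, v) for k, v in parse_qsl(parts.query) if k in CONTENT_PARAMS]
    kept.sort()  # stable order so equivalent URLs compare equal
    return urlunsplit((parts.scheme, parts.netloc, parts.path, urlencode(kept), ""))


print(canonical_url("https://www.example.com/shoes?sort=price_asc&color=red&utm_source=news"))
print(canonical_url("https://www.example.com/shoes?utm_source=news&page=2"))
```

The returned value is what would go into the page's canonical link element; an allow-list is used rather than a block-list so that newly introduced tracking parameters are dropped by default.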

Internal Linking Structure and Crawl Depth

Your internal linking architecture directly influences how Googlebot discovers your site and which pages receive crawling priority. Crawl depth refers to the number of clicks required to reach a page from the homepage.

Flat Site Architecture

In an ideal site architecture, important pages should be reachable within three clicks from the homepage. Deep hierarchies make it difficult for Googlebot to discover and prioritize important pages. Breadcrumb navigation, related content sections, category pages, and footer links create an effective internal linking network that reduces crawl depth across the site.
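Click depth can be measured with a breadth-first search over the internal link graph. The sketch below assumes the graph has already been exported from a site crawl as a dictionary of page to linked pages; the paths are made up.

```python
from collections import deque


def click_depths(links: dict, start: str = "/") -> dict:
    """Return the minimum number of clicks from the start page to each page."""
    depths = {start: 0}
    queue = deque([start])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:  # first visit is the shortest path in BFS
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


# Hypothetical internal link graph.
links = {
    "/": ["/shoes", "/blog"],
    "/shoes": ["/shoes/running"],
    "/shoes/running": ["/shoes/running/trail-x"],
    "/shoes/running/trail-x": ["/shoes/running/trail-x/reviews"],
    "/blog": ["/"],
}
depths = click_depths(links)
too_deep = sorted(page for page, depth in depths.items() if depth > 3)
print(too_deep)  # pages more than three clicks from the homepage
```

Pages that land in `too_deep` are candidates for extra links from category pages, breadcrumbs, or related content sections.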

Detecting Orphan Pages

Pages with no internal links pointing to them are classified as orphan pages. These pages can only be discovered through sitemaps and typically receive low crawl priority. Regular site crawls should identify orphan pages so they can be integrated into the internal linking structure or removed if no longer needed.
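Orphan detection reduces to a set difference: URLs you know exist (for example from your sitemaps) minus URLs that receive at least one internal link. A minimal sketch, with hypothetical paths and the homepage excluded because it is the crawl entry point:

```python
def find_orphans(sitemap_urls: list, links: dict, home: str = "/") -> list:
    """Return known URLs that no other page links to."""
    linked = {
        target
        for source, targets in links.items()
        for target in targets
        if target != source  # a page linking to itself does not count
    }
    return sorted(set(sitemap_urls) - linked - {home})


sitemap_urls = ["/", "/shoes", "/shoes/running", "/landing/old-campaign"]
links = {"/": ["/shoes"], "/shoes": ["/shoes/running", "/shoes"]}
orphans = find_orphans(sitemap_urls, links)
print(orphans)
```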

Link Equity Distribution

Internal links transfer authority between pages. By directing more internal links to your most important pages, you increase both their crawl priority and ranking potential. However, pages overloaded with excessive links dilute the equity passed through each individual link. Build a strategic and purposeful internal linking structure rather than linking to everything from everywhere.

Server Response Time and Crawlability

Server performance has a direct impact on crawl budget. Google reduces crawl rate for sites with high response times, which means fewer pages get crawled within a given period.

Speed Optimization Recommendations

Keep your server response time, known as Time to First Byte or TTFB, below 200 milliseconds. Use a CDN to accelerate static resource delivery. Optimize database queries and eliminate unnecessary operations. Implement server-side caching mechanisms. Adopt HTTP/2 or HTTP/3 protocols to improve connection efficiency. Every millisecond saved in server response time compounds across thousands of crawled pages.

Hosting Infrastructure

Shared hosting plans often cannot handle the crawl demands of large sites. For sites at scale, VPS, dedicated servers, or cloud hosting solutions are recommended. Ensure your server resources remain sufficient even during Googlebot's intensive crawl periods, which can occur unpredictably.

Log File Analysis

Server log analysis provides the most valuable data source for crawl budget optimization. Log files reveal exactly which pages Googlebot visits, how frequently it returns, what status codes it encounters, and its overall crawling patterns.

Insights from Log Analysis

Log analysis provides the list of most frequently crawled pages, identification of uncrawled or rarely crawled pages, the impact of 404 and 5xx errors on crawling behavior, trends and changes in crawl frequency over time, redirect chains followed by Googlebot, and the distribution of mobile versus desktop Googlebot crawls.
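As a starting point, the first few of these insights can be pulled from raw access logs with a short script. This sketch assumes the common "combined" log format used by default in Apache and Nginx, and identifies Googlebot by user-agent string only. User agents can be spoofed, so a production pipeline would also verify the requesting IP, for example through reverse DNS.

```python
import re
from collections import Counter

# Combined log format: ip, identity, user, [time], "request", status, size, "referer", "agent"
LOG_PATTERN = re.compile(
    r'^(?P<ip>\S+) \S+ \S+ \[(?P<time>[^\]]+)\] '
    r'"(?P<method>\S+) (?P<path>\S+) [^"]*" (?P<status>\d{3}) \S+ '
    r'"[^"]*" "(?P<agent>[^"]*)"'
)


def summarize_googlebot(lines):
    """Count crawled paths and status codes for requests claiming to be Googlebot."""
    paths, statuses = Counter(), Counter()
    for line in lines:
        match = LOG_PATTERN.match(line)
        if not match or "Googlebot" not in match["agent"]:
            continue
        paths[match["path"]] += 1
        statuses[match["status"]] += 1
    return paths, statuses


GOOGLEBOT = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
sample = [
    f'66.249.66.1 - - [10/Jan/2026:06:25:14 +0000] "GET /products/shoes HTTP/1.1" 200 5123 "-" "{GOOGLEBOT}"',
    f'66.249.66.1 - - [10/Jan/2026:06:25:20 +0000] "GET /products/shoes HTTP/1.1" 200 5123 "-" "{GOOGLEBOT}"',
    f'66.249.66.1 - - [10/Jan/2026:06:26:02 +0000] "GET /old-page HTTP/1.1" 404 310 "-" "{GOOGLEBOT}"',
    '203.0.113.9 - - [10/Jan/2026:06:27:00 +0000] "GET /products/shoes HTTP/1.1" 200 5123 "-" "Mozilla/5.0"',
]
paths, statuses = summarize_googlebot(sample)
print(paths.most_common(5))
print(statuses)
```

Comparing the crawled paths against your sitemap URLs then shows which important pages Googlebot rarely or never visits.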

Log Analysis Tools

Tools like Screaming Frog Log Analyser, Botify, and Oncrawl provide visualization and analysis of log files. SEOctopus's integrated Crawl Analysis module combines log data with site crawl data to present a unified view of your crawl budget performance.

Pagination and Crawl Budget

In large content archives, product listings, and category pages, pagination can have a significant impact on crawl budget. A category with hundreds of pagination pages can consume substantial crawling resources.

Pagination Optimization Strategies

Add unique and valuable content to each pagination page where possible. Limit pagination depth and use category subdivision to reduce the number of paginated pages needed. For load more and infinite scroll implementations, ensure HTML links exist for Googlebot to discover the content without JavaScript execution. In sitemap files, list the actual content pages rather than the pagination pages themselves. Use pagination link structures that provide access to the first page, last page, and strategic intermediate pages, so that deep pages are never more than a few clicks away.

JavaScript Rendering and Crawl Budget

JavaScript-rendered content creates a double impact on crawl budget. Googlebot first crawls the raw HTML and then sends the page to a separate rendering queue for JavaScript execution. This two-stage process requires additional resources and time.

JavaScript-Related Crawl Budget Problems

Links loaded through JavaScript may not be discovered during the initial crawl. Pages using client-side rendering take significantly longer to be indexed. JavaScript errors can prevent pages from rendering at all, meaning their content never gets indexed. Excessively large JavaScript bundles increase rendering time and reduce crawling efficiency for the entire site.

Solution Strategies

Use server-side rendering or static site generation to ensure content is present in the initial HTML response. Ensure critical navigation links exist in the HTML markup without JavaScript dependency. Leverage incremental static regeneration for large sites to serve dynamic content efficiently. Minimize JavaScript bundle sizes to reduce rendering time and improve overall crawlability.

Monitoring Crawl Stats in Search Console

Google Search Console provides the most direct way to monitor your crawl budget performance. The crawl stats report offers comprehensive data including total crawl requests, average response time, status code distribution, file type distribution (such as HTML, images, CSS, and JavaScript), Googlebot type breakdown between mobile and desktop, and crawl purpose differentiated between discovery and refresh.

How to Interpret Search Console Data

A sudden drop in crawl requests may indicate server issues or robots.txt changes that need investigation. High average response times suggest server performance optimization is needed. A high ratio of 404 errors indicates broken links or deleted pages that should be addressed. If the discovery crawl ratio is low, new content may not be receiving sufficient internal links to attract Googlebot's attention.

Crawl Budget for Large Sites With Over 100K Pages

Websites with more than 100,000 pages require a significantly more strategic approach to crawl budget management than smaller sites.

Prioritization Strategy

Classify pages by revenue potential and traffic value. Increase crawl frequency for your most important pages by directing more internal links to them. Keep low-value pages out of the crawl with robots.txt disallow directives, and reserve noindex for pages that may be crawled but should stay out of the index, since noindex alone does not stop crawling. Manage seasonal campaign pages by removing them from crawl paths during off-seasons and reactivating them before campaign launches.

Technical Infrastructure Recommendations

Serve fast responses to Googlebot using edge caching. Consider dynamic rendering to serve bot traffic with pre-rendered HTML optimized for crawling, keeping in mind that Google describes it as a workaround rather than a long-term solution and recommends server-side or static rendering where possible. Configure hreflang and alternate tags correctly to prevent redundant crawling across multilingual and multi-regional pages. Maintain a clean and consistent URL structure to prevent parameter confusion and duplication.

Periodic Cleanup

Conduct regular site audits to identify pages that are not being indexed, not generating traffic, and not providing value. Either improve these pages, redirect them to relevant alternatives, or remove them entirely. This cleanup process ensures crawl budget flows to your most valuable content.

Noindex vs Nofollow vs Disallow

These three directives serve different purposes and have distinct effects on crawl budget.

Noindex prevents indexing but does not prevent crawling. Googlebot has to keep fetching the page to see the directive, so the page still consumes crawl budget. Links on the page can still be followed, and link equity can still pass through them.

Nofollow tells Google not to pass link equity through a link. Since 2019 Google has treated it as a hint rather than a strict directive. It does not affect whether the page carrying the link is itself crawled or indexed. Its direct impact on crawl budget is minimal, though it can indirectly influence crawl patterns through its effect on the link graph.

Disallow in robots.txt prevents Googlebot from crawling the specified path entirely. This provides direct crawl budget savings because Googlebot does not visit these pages at all. However, it is important to note that if disallowed pages receive external links, Google may still index them based on anchor text and surrounding context without seeing their actual content.

The most effective strategy is to use disallow for pages you do not want crawled at all and noindex for pages you want crawled but not indexed. Using both directives on the same page is counterproductive because if a page is disallowed, Googlebot cannot access it to read the noindex tag, rendering the noindex directive ineffective.
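The decision logic above can be written down as a small audit helper. This is an illustrative sketch only: `PagePolicy` and `audit` are hypothetical names, and the two flags are assumed to come from your own crawl data.

```python
from dataclasses import dataclass


@dataclass
class PagePolicy:
    path: str
    disallowed: bool  # blocked by a robots.txt Disallow rule
    noindex: bool     # carries a noindex meta tag or X-Robots-Tag header


def audit(policy: PagePolicy) -> str:
    """Describe the effect of a page's crawl and index directives."""
    if policy.disallowed and policy.noindex:
        return "conflict: noindex cannot be read while crawling is blocked"
    if policy.disallowed:
        return "not crawled; may still be indexed from external links"
    if policy.noindex:
        return "crawled but kept out of the index"
    return "crawled and eligible for indexing"


pages = [
    PagePolicy("/cart/", disallowed=True, noindex=False),
    PagePolicy("/thank-you", disallowed=False, noindex=True),
    PagePolicy("/internal-search", disallowed=True, noindex=True),
]
for page in pages:
    print(page.path, "->", audit(page))
```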

Frequently Asked Questions

Does crawl budget matter for small websites?

Generally, crawl budget is not a critical concern for sites with fewer than 10,000 pages. Google can crawl sites of this size without difficulty. However, even small sites can experience crawl budget waste if they have technical issues such as thousands of parameter URLs or long redirect chains. Maintaining good technical SEO hygiene is always beneficial regardless of site size.

How can I increase Googlebot's crawl frequency?

While you cannot directly control Google's crawl frequency, several strategies encourage Google to allocate more resources to your site. Publish frequent and high-quality content updates. Keep your XML sitemap current and submit it through Search Console. Improve your server response times. Strengthen your internal linking structure. Earn high-authority backlinks from external sites. These signals collectively indicate to Google that your site deserves more crawling resources.

Why do pages I blocked in robots.txt still appear in the index?

Robots.txt only prevents crawling, not indexing. If other sites link to a blocked page, Google may index it based solely on the URL and anchor text information without seeing its actual content. To definitively prevent indexing, use a noindex meta tag or X-Robots-Tag HTTP header. However, these directives can only be read if the page remains crawlable, so remove the robots.txt block for any page you want deindexed this way.

How often should I perform crawl budget optimization?

Crawl budget optimization is not a one-time task. Review Search Console crawl statistics monthly. Conduct comprehensive site audits quarterly to detect newly emerging issues. After major site changes such as migrations, redesigns, or large content updates, immediately perform log analysis to monitor Googlebot's response. SEOctopus automates these monitoring processes for continuous crawl budget management.

Is crawl budget a bigger problem for JavaScript sites?

Yes, JavaScript-heavy sites face additional crawl budget challenges. Googlebot requires extra resources and time to render JavaScript content. Furthermore, the JavaScript rendering queue operates separately from the main crawling queue and processes more slowly. For this reason, using server-side rendering or static site generation is particularly important for JavaScript sites to maintain crawl budget efficiency.

What is the relationship between crawl budget and indexing?

Crawl budget does not directly determine indexing but establishes its prerequisite. A page must be crawled before it can be indexed. When crawl budget is insufficient, important pages may go uncrawled and consequently unindexed. However, being crawled does not guarantee indexing. Google evaluates content quality and may choose not to index pages that fail to meet its quality criteria. Crawl budget optimization ensures Google prioritizes crawling your valuable pages, thereby increasing the likelihood of indexing and improving your organic search visibility.

Can I use disallow and noindex together on the same page?

Using both directives on the same page is counterproductive and should be avoided. If you block a page via robots.txt disallow, Googlebot cannot access the page to read the noindex meta tag. This means the noindex directive becomes ineffective. If you want to prevent crawling, use disallow. If you want to allow crawling but prevent indexing, use noindex. Combining both creates a contradiction that typically results in undesired outcomes including possible phantom indexing based on external signals.